Successful event outcomes, unusual web traffic, and the psychology of motivation

The other day, I noticed a weird periodic surge of interest in one of my blog posts. Every January 1, page views for this post (but no other) spiked way up. They stayed high for 7–10 days, then went back to normal year-round levels.

It took some head-scratching before I finally realized what was going on. The article describes an obscure method for quickly deleting all emails on Apple devices, something Apple didn’t make easy until recently. Apparently, every January thousands of people all over the world stare at the 6,000 emails stuck on their iPhones and resolve that this is the time they’re finally going to clean them up. So they Google “delete mail” and find my highly ranked post (currently, out of 228 million results, I’m #2). They click on it, and, voilà, lots of page views.

Well, lots of page views for a week or so. Then, what I call the New Year’s Resolutions Effect becomes…well, ineffective. People forget about their New Year’s resolutions and go on with their lives.

Why we are so poor at keeping resolutions

Why are we so poor at keeping resolutions? While scientific research into the psychology of motivation doesn’t yet offer a definitive explanation, there are some plausible theories. One of them, nicely explained by psychologist Tom Stafford, was proposed by George Ainslie in his book Breakdown of Will (a forty-page “précis” of the book is also available).

As Tom puts it:

“…our preferences are unstable and inconsistent, the product of a war between our competing impulses, good and bad, short and long-term. A New Year’s resolution could therefore be seen as an alliance between these competing motivations, and like any alliance, it can easily fall apart.”

—Tom Stafford, How to formulate a good resolution

And to make a long story short, he shares this consequence of Ainslie’s theory:

“…if you make a resolution, you should formulate it so that at every point in time it is absolutely clear whether you are sticking to it or not. The clear lines are arbitrary, but they help the truce between our competing interests hold.”

For years, I’ve used this observation to create better event outcomes. Here’s what I do.

If you’ve done a good job, participants will be fired up by the close of your event, ready to implement good ideas they’ve heard and seen. This is prime time for them to resolve to make changes in their professional lives. So how can we maximize the likelihood that they will make good resolutions and then keep them?

A personal introspective

Near the end of my events, I use a personal introspective to give every attendee an opportunity to explore changes they may want to make in their life and work as a result of their conference experiences. (For full details of how to hold a personal introspective, see my book The Power of Participation: Creating Conferences That Deliver Learning, Connection, Engagement, and Action.)

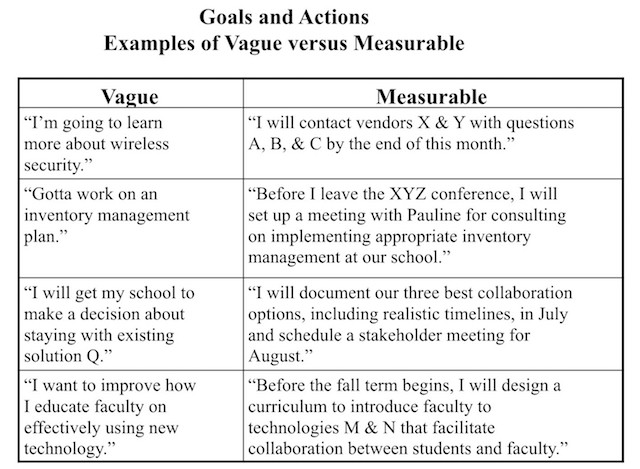

At the start of the personal introspective, each attendee privately writes down the changes they want to make. Before they do so, I explain a crucial question they will need to answer later in the process: “How will you know when these changes happen?” I also give them several relevant examples that contrast vague goals and actions with measurable ones.

It turns out that asking “How will you know when these changes happen?” and giving relevant examples beforehand is very important. I’ve learned that if you don’t, hardly anyone will come up with measurable resolutions that make it crystal clear whether they are succeeding or not.

Even with these directions and support, some people find it very difficult to come up with measurable, time-bound answers. This is one reason every personal introspective includes a follow-up small-group component, where participants can share their goals and get help refining them. But that’s material for another blog post.

Over the years, I’ve received enough feedback about the effectiveness of personal introspectives to know they can be a powerful tool for better event outcomes. As Ainslie’s theory predicts, a vital component is helping participants make specific, measurable, and time-bound resolutions that are easier to keep.

Photo attribution: Flickr user chrish_99